Introduction

Man Group is a global, alternative investment manager with over 35 years of systematic trading experience. A third of our workforce are technologists, engineers, quants and data scientists. Our Python Platform team is responsible for providing common tools and infrastructure that support hundreds of Python developers daily.

This article is a continuation of the journey to make pip faster. After improving pip itself last year, I continued to investigate performance bottlenecks in the Python interpreter: I/O code, buffering logic, compression libraries.

The core problem: Python’s compression code has high overhead and relies on unoptimized C libraries (zlib). We can’t get `pip install` to be fast and to compete with `uv` unless we can get state-of-the-art compression in Python.

pip has a fundamental constraint: as the standard package manager for Python, it is limited to the standard library bundled with the Python interpreter (and a handful of pure-Python libraries that are allowed to be bundled with pip).

To make pip faster, one must make Python faster. That’s why I pushed for the integration of zlib-ng into Python.

I am pleased to announce that Python has switched to zlib-ng for faster compression and decompression, starting with the Python 3.14.0 release for Windows at the end of 2025.

The headline improvements include:

- Decompression: ~2 times faster at all levels

- Compression: ~3 times faster at equivalent size, up to ~5 times at level 1 (with a larger file size)

- CRC: 10 to 100 times faster, using hardware CRC instructions

Note: Python for Windows gets the improvement first because it’s distributed as precompiled binaries. On other operating systems, Python is distributed as source code, so you will have to rely on upstream maintainers (Linux distributions, conda, uv Python) to build Python with zlib-ng.
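To check which library a given interpreter is using, the `zlib` module exposes version strings. The snippet below is a minimal sketch: `describe_zlib` is a hypothetical helper name, and it assumes that `zlib.ZLIBNG_VERSION` (documented as added in Python 3.14) is only present when the module was built against zlib-ng, so it is read defensively with `getattr`.

```python
import zlib


def describe_zlib() -> dict:
    """Collect the zlib version strings exposed by the running interpreter."""
    return {
        # Version of the zlib headers the module was compiled against.
        "compiled_against": zlib.ZLIB_VERSION,
        # Version of the library actually loaded at runtime.
        "loaded_at_runtime": zlib.ZLIB_RUNTIME_VERSION,
        # Assumption: only defined on Python 3.14+ builds that use zlib-ng.
        "zlib_ng": getattr(zlib, "ZLIBNG_VERSION", None),
    }


info = describe_zlib()
print(info)
```

If `zlib_ng` comes back as `None`, the interpreter was most likely built against classic zlib, or it predates 3.14.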

A 28-year-old bug, fixed

Additionally, I identified and fixed the default compression level in the Python interpreter which has been sub-optimal for 28 years! This fix will ship with Python 3.15.0 in late 2026. View the PR here

- Compression functions take a level between 1 and 9; the default is 6 for gzip compression.

- Python gzip and tarfile modules have been compressing at level 9 by default, resulting in much slower compression for limited space saving.

- All code that calls gzip.open() or tarfile.open() in write or append mode, or gzip.compress(), without the compresslevel argument is affected.

- I first came across this bug when working with tornado/jupyter in 2016: they served HTTP content at ~1 MB/s with extreme latency spikes, due to compressing with the Python gzip module.

- This performance issue dates to the very first commit that added compression to Python in April 1997.

- This may be one of the longest standing bugs in a common code path in a core library.
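Until the fixed default is available, the affected calls can pass the level explicitly. The sketch below is illustrative only: the payload is synthetic, and `gzip_sizes` and `make_targz` are hypothetical helper names, but `compresslevel` is the real keyword accepted by `gzip.compress()` and by `tarfile.open()` in `w:gz` mode.

```python
import gzip
import io
import tarfile

# Synthetic, highly compressible payload (an assumption for illustration).
payload = b"the quick brown fox jumps over the lazy dog\n" * 20_000


def gzip_sizes(data: bytes, levels=(1, 6, 9)) -> dict:
    """Return the gzip-compressed size of `data` for each explicit level."""
    return {level: len(gzip.compress(data, compresslevel=level)) for level in levels}


def make_targz(data: bytes, level: int = 6) -> bytes:
    """Build an in-memory .tar.gz with a single member at an explicit level."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz", compresslevel=level) as archive:
        member = tarfile.TarInfo(name="payload.txt")
        member.size = len(data)
        archive.addfile(member, io.BytesIO(data))
    return buffer.getvalue()


sizes = gzip_sizes(payload)
archive_bytes = make_targz(payload)
print(sizes, len(archive_bytes))
```

Passing the level explicitly makes the behaviour identical across Python versions, whichever default the interpreter ships with.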

Now let’s dive into compression libraries.

zlib History: When "Stable" Means "Unmaintained"

zlib is the reference implementation of the DEFLATE compression algorithm (RFC 1951), used by zip, gzip, tar.gz, PNG images, HTTP and countless other formats. It is arguably the most used library in the world.

zlib is the ultimate xkcd 2347. For decades, this foundational library has been essentially written and maintained by a single person.

Recognizing the importance of the compression library, the community created several forks about 10 years ago (zlib-cloudflare, zlib-chromium, zlib-ng, zlib-intel, etc…) each shipping fixes and improvements, starting with tiny tweaks that made the algorithm faster.

zlib-ng was designed from the start as a drop-in replacement for zlib with ZLIB_COMPAT=1, or it can be used as a separate library exposing different function names.

zlib-ng is now mature and emerging as the new standard:

- Fedora 40 switched to zlib-ng in place of zlib (Fedora is the testing ground for CentOS and RHEL)

- Python 3.14 switched to zlib-ng in place of zlib

That makes Python the second major ecosystem to switch. Hopefully more will follow soon.

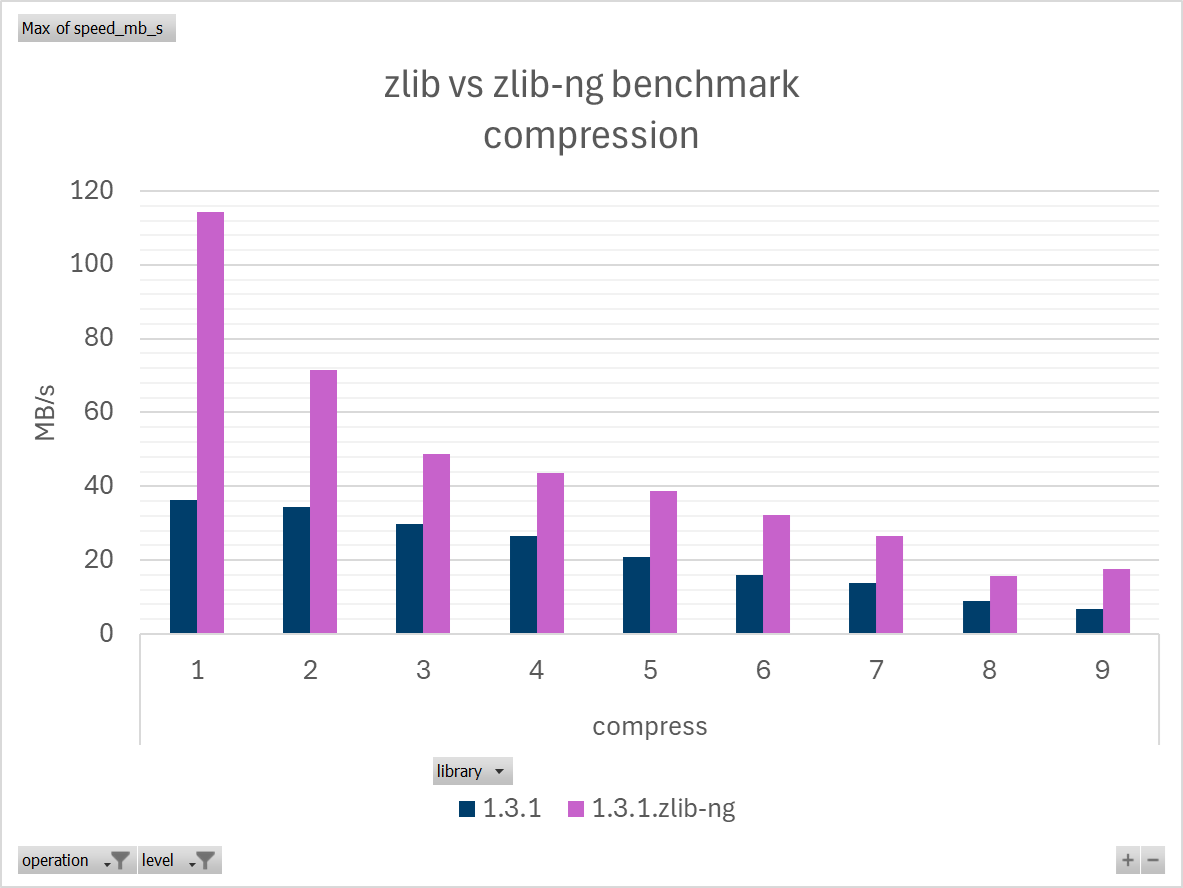

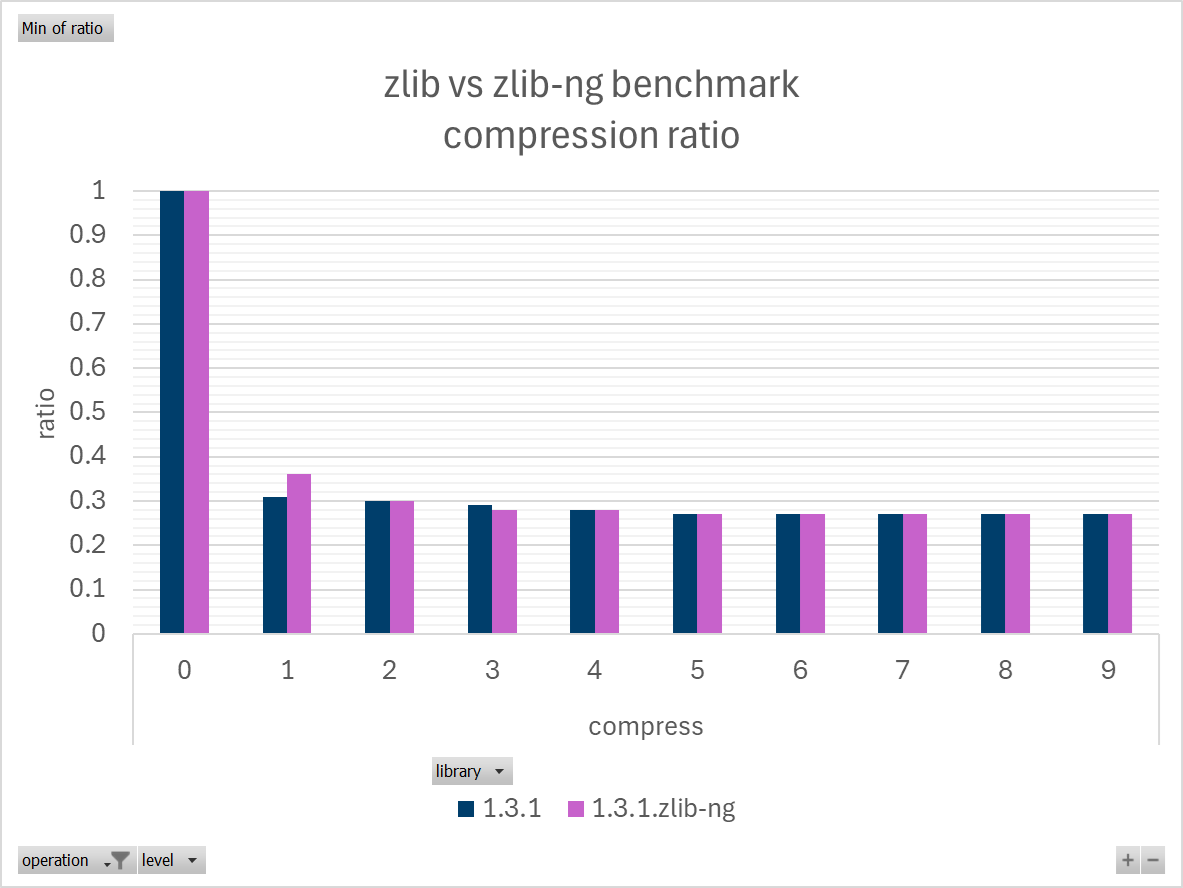

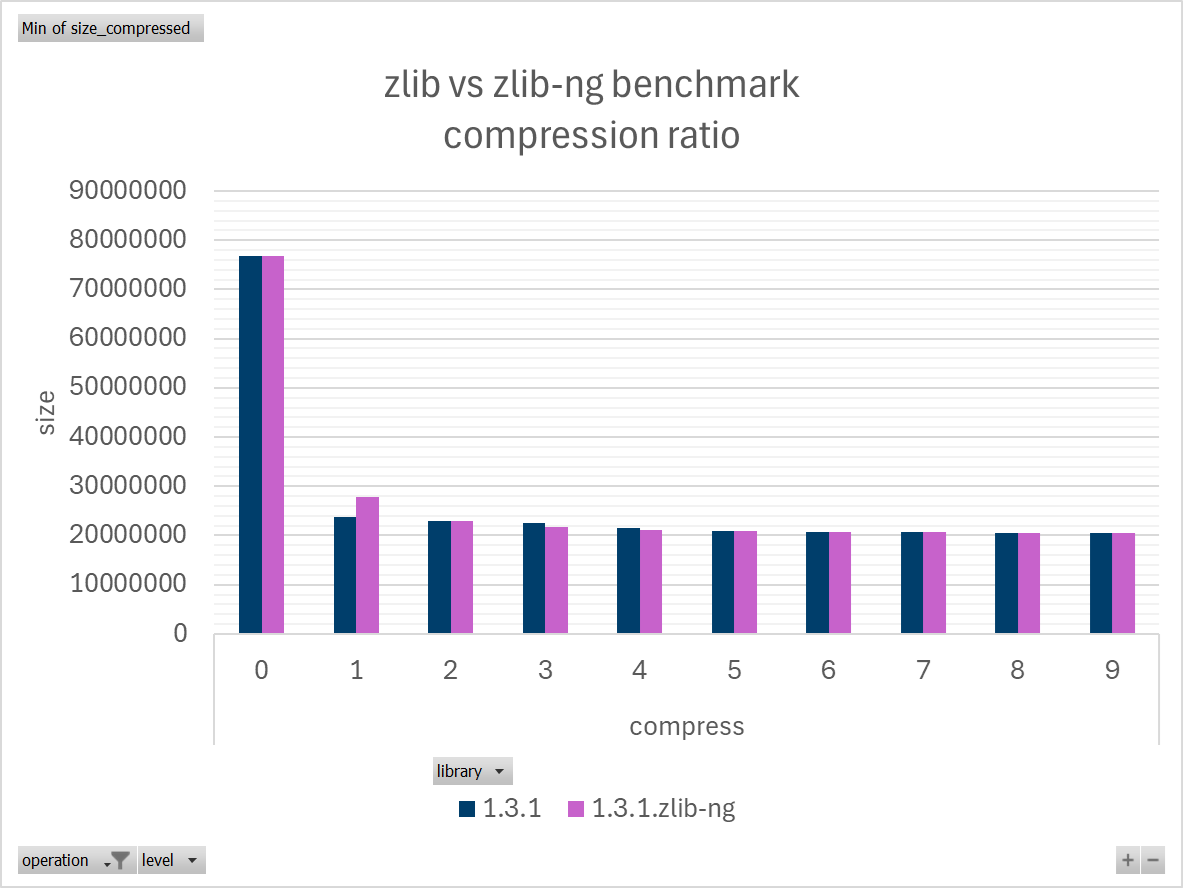

Below are a few benchmarks to illustrate the performance improvement.

The metrics were captured using the NumPy source package, numpy-2.4.0.tar, 73MB, on a Windows laptop in battery saving mode (turbo boost off to reduce variance), and represent the best result out of three runs, running code from the Python 3.14 branch shortly before and after the switch.

Compression Performance: Breaking Free from the MB/s Bottleneck

The performance of classic deflate compression has been a long-standing problem, historically stuck at single-digit MB/s speeds depending on the CPU. It’s particularly problematic when dealing with gigabytes of data (backup, container images, packages), where deflate compression can add whole minutes to operations that otherwise take seconds.

For this reason, it has been standard practice to set the compression level to 1 in a lot of places to alleviate the poor performance of deflate compression: there is little difference in size between zlib levels, but the performance drop is significant with each level. Even at the fastest level 1, zlib compression usually caps well below 100 MB/s (varying with CPU), which is slower than many disks (SSD) and many wired networks (datacentre).

Source: Internal Man Group performance testing


zlib-ng delivers significant improvements:

- zlib-ng level 1 is an ultra-fast level intended to provide maximum speed with less compression. It’s 3 times faster than zlib at level 1 (varying with data) but the file is larger.

- zlib-ng level 2 is a medium speed level, offering nearly all the compression deflate has to offer while using as little CPU as possible. It’s 2 times faster than zlib level 2 to achieve the same file size (both 0.30 ratio).

- Levels 3 to 9 on both libraries all fall within a 0.27 to 0.29 compression ratio; the size difference is a rounding error, but performance drops with each level.

- zlib-ng level 3 produces the same file size (0.28 ratio) as higher zlib levels, while outperforming them.

Overall, zlib-ng compression is twice as fast as zlib at each level, or approximately 3 times as fast when comparing equivalent output size.

If you were accustomed to setting ‘level=1’ everywhere like me, you may need to adjust your habit to set ‘level=2’ to keep some compression.

As a rule of thumb, zlib-ng level 1 can break the 100 MB/s barrier. Use level 1 for absolute performance; use level 2 (or 3) when file size, disk speed or network bandwidth is a greater concern.
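To see where the trade-off falls on your own data and interpreter, a rough measurement along the following lines can help. It is a minimal sketch, not the harness used for the charts in this article: `bench_compress` is a hypothetical helper, the sample data is synthetic, and a single timed run per level is noisy.

```python
import time
import zlib


def bench_compress(data: bytes, levels=range(1, 10)) -> list:
    """Return (level, compression ratio, MB/s) for each requested level."""
    results = []
    for level in levels:
        start = time.perf_counter()
        compressed = zlib.compress(data, level)
        elapsed = time.perf_counter() - start
        ratio = len(compressed) / len(data)
        # Throughput is measured on the uncompressed input size.
        speed = len(data) / 1e6 / elapsed if elapsed > 0 else float("inf")
        results.append((level, ratio, speed))
    return results


# Synthetic sample (an assumption); replace with a representative file.
sample = b"".join(b"row %d: value=%d\n" % (i, (i * 7919) % 1000) for i in range(100_000))

results = bench_compress(sample, levels=(1, 2, 3, 6, 9))
for level, ratio, speed in results:
    print(f"level {level}: ratio {ratio:.2f}, {speed:.0f} MB/s")
```

The absolute numbers depend heavily on the CPU and on the data, so compare levels on the same machine rather than against the figures quoted here.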

The new ultra-fast behaviour will give a new life to legacy applications that only support deflate compression. Spark is one example: it serializes gigabytes of data between nodes, and the only options are deflate or no compression. zlib compression at level 1 is too slow (waiting on CPU for minutes), while disabling compression is too heavy on the network (sending three times the amount of data). Our Spark usage will benefit greatly from zlib-ng.

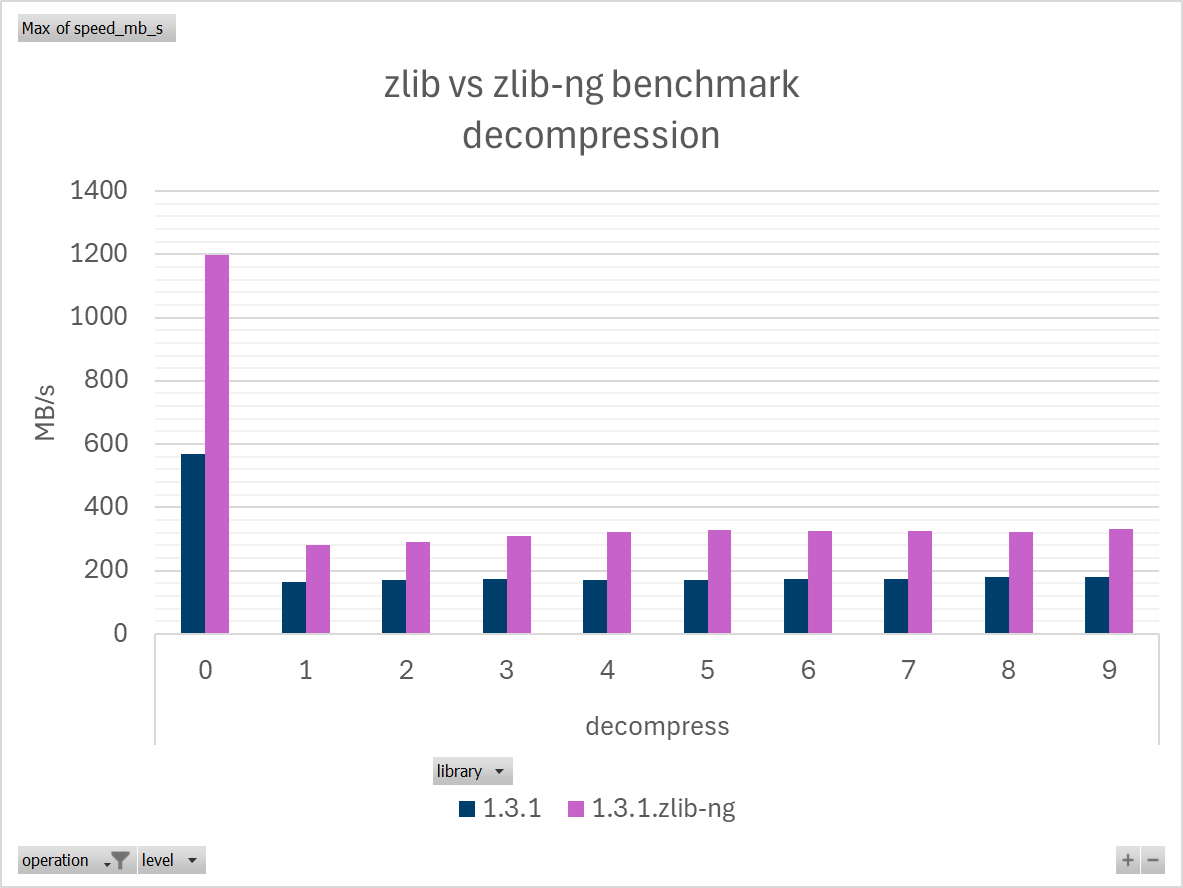

Decompression Performance: Speed Where It Matters Most

The chart below is testing decompression speed with data compressed at each level. Performance is similar for all inputs, as expected from deflate.

Noteworthy: It’s surprising that there is so much difference at level 0 (compression disabled) which should be a no-op.

Source: Internal Man Group performance testing

zlib-ng delivers significant improvements:

- zlib-ng decompresses about twice as fast as zlib at each level

- default level 6 on the chart: 326 MB/s zlib-ng vs 173 MB/s zlib (1.88x speedup)
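A comparable measurement can be sketched for decompression. As with the figures above, this takes the best of three runs; `bench_decompress` is a hypothetical helper and the sample is synthetic, so treat the output as indicative only.

```python
import time
import zlib


def bench_decompress(data: bytes, level: int, repeats: int = 3) -> float:
    """Return the best-of-`repeats` decompression speed in MB/s of output."""
    compressed = zlib.compress(data, level)
    best = float("inf")
    restored = b""
    for _ in range(repeats):
        start = time.perf_counter()
        restored = zlib.decompress(compressed)
        best = min(best, time.perf_counter() - start)
    # Sanity check: decompression must return the original bytes.
    assert restored == data
    return len(data) / 1e6 / best if best > 0 else float("inf")


# Synthetic sample (an assumption); replace with a representative file.
sample = b"pip install numpy\n" * 200_000

speeds = {level: bench_decompress(sample, level) for level in (1, 6, 9)}
for level, speed in speeds.items():
    print(f"data compressed at level {level}: {speed:.0f} MB/s")
```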

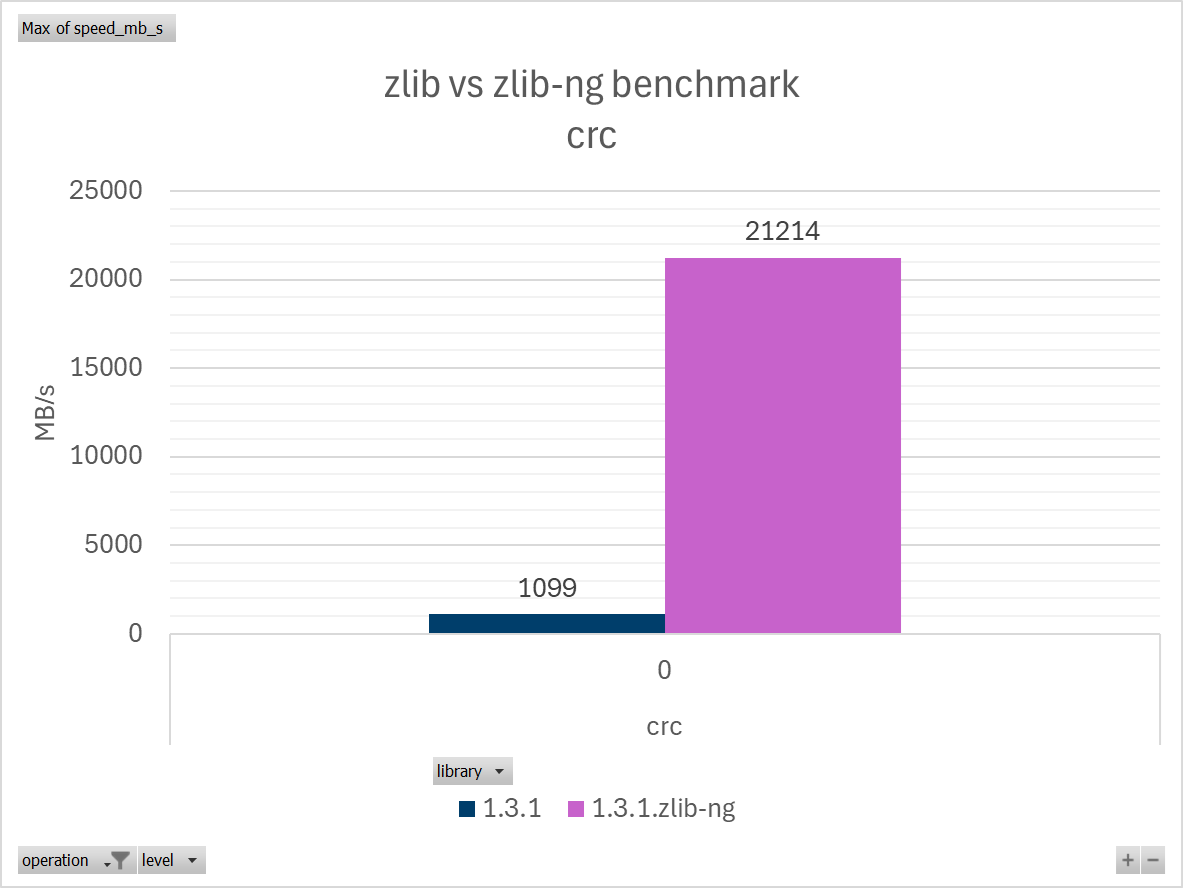

CRC Performance: The Hidden Time Sink

Now comes the real improvement: CRC performance is orders of magnitude faster with zlib-ng.

When I was looking to improve the performance of pip, I discovered that 5% to 10% of the time in a pip install is spent calculating CRCs.

CRC is a sufficiently common operation that CPUs have instructions to compute the CRC. The instructions started shipping with Nehalem CPUs in 2008 and have been standardized in the SSE 4.2 instruction set.

Source: Internal Man Group performance testing

Hardware instructions make the checksum effectively free.

I observed as much as 600 GB/s on a desktop (AMD 7950X) for small data that can fit in CPU cache. It’s mind blowing!
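The CRC in question is reachable from Python as `zlib.crc32()`, which also accepts a running value so that data can be checksummed chunk by chunk. The sketch below is an illustration under assumptions: `crc32_chunked` and `crc32_speed` are hypothetical helpers, and the 16 MiB zero-filled buffer is an arbitrary choice that is likely larger than most CPU caches.

```python
import time
import zlib


def crc32_chunked(chunks) -> int:
    """Compute a CRC-32 incrementally by feeding the running value back in."""
    crc = 0
    for chunk in chunks:
        crc = zlib.crc32(chunk, crc)
    return crc


def crc32_speed(size: int = 16 * 1024 * 1024, repeats: int = 3) -> float:
    """Return the best-of-`repeats` CRC-32 throughput in MB/s."""
    data = bytes(size)  # zero-filled buffer; CRC cost does not depend on content
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        zlib.crc32(data)
        best = min(best, time.perf_counter() - start)
    return size / 1e6 / best if best > 0 else float("inf")


# b"123456789" is the standard check input for CRC-32.
print(hex(zlib.crc32(b"123456789")))  # 0xcbf43926
print(f"{crc32_speed():.0f} MB/s")
```

Running the same script on an interpreter built with zlib and on one built with zlib-ng gives a quick view of the difference on a given machine.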

Another potential target for optimisation.

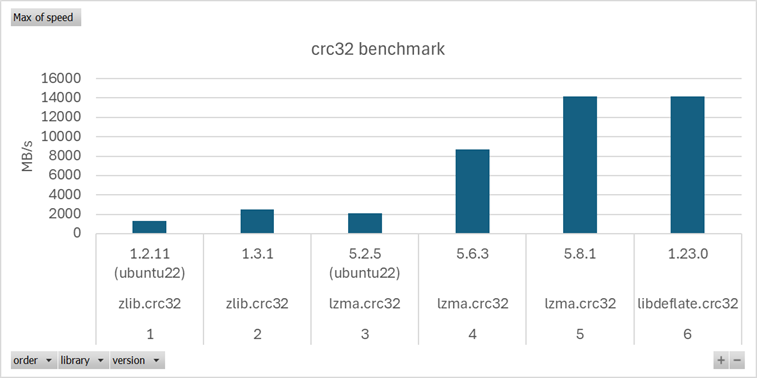

Meanwhile in Linux Land: The Tale of Two CRC Implementations

LZMA is used to compress packages on some Linux distributions (tar.xz files). It offers stronger compression than deflate though at a much slower speed.

Both LZMA and zlib use the same CRC algorithm, making it possible to compare both CRC implementations, from libzlib and liblzma (xz-utils package).

Since Python ships zlib and lzma modules out of the box, it’s straightforward to swap the libraries on a test machine and measure the difference.

Note: Libdeflate is another contender for a faster deflate library; it was the fastest library by a landslide not long ago. Libdeflate is not a drop-in replacement: it can only compress/decompress a whole object in memory, whereas zlib provides streaming APIs.

Source: GitHub

liblzma is using optimized CRC instructions in recent versions, same as zlib-ng and libdeflate.

zlib is not using optimized CRC instructions.

Thankfully, as Python ships with both, it has access to both implementations and could pick the faster one. There is a PR to the Python interpreter to expose both CRC functions: https://github.com/python/cpython/pull/131721

We've had CPUs with optimized instructions for CRC for 15 years, but software has not kept up with the hardware.

Related: Microsoft Windows 11 requires a CPU with SSE4.2 support, which includes the required instructions for faster CRC https://learn.microsoft.com/en-us/windows-hardware/design/minimum/minimum-hardware-requirements-overview

Linux distributions are in active discussions about whether to adopt the x86-64-v2 (2008) or x86-64-v3 (2015) instruction set level as the minimum CPU baseline.

Conclusion

Battle-tested software has stood the test of time: it is proven, tested and reliable. Even so, it’s important to reevaluate the constraints of our platforms, particularly as technology improvements have enabled out-of-the-box optimisation opportunities.

Massive thanks to the zlib-ng maintainers and the Python maintainers who helped to integrate the change.

This work highlights the significant improvements that zlib-ng can bring.

The integration into Python for Windows is merely the beginning.

I hope this encourages Linux distributions (RHEL, Debian, Ubuntu) to switch to zlib-ng.
