Introduction

At Man Group, we recognise that Responsible Investment ('RI') is fundamental to our fiduciary duty to our clients and beneficiaries. We also understand the importance of sound stewardship in managing investors’ capital.

Translating this understanding from a firm-wide belief to firm-wide practice requires more than an intellectual grasp of the issues at hand. Man Group’s commitment to RI therefore demanded the creation of a firm-wide platform to support investment decision making and risk management. This article is the story of how we created this platform to ensure that our Investment and Sales teams have the necessary ESG data and reporting capability to bolster and support client relationships. The ESG Analytics Tool allows us to demonstrate to our clients that their values are represented in their portfolios.

Getting started

There were three clear objectives set by Man’s RI committee:

- Organise the complexity of ESG data for investment teams and our clients. This includes data sets from various external providers and stewardship information, as well as internal proprietary ESG data;

- Allow for measurement and management across asset classes, from single stocks to portfolios, showing financial and non-financial data side by side;

- Provide a uniform approach to reporting for the whole firm.
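To make the second objective concrete, the sketch below rolls stock-level data up to portfolio level and reports a financial and a non-financial figure side by side. It is only an illustration: the `Holding` fields, the sample values and the choice of a weight-averaged ESG score renormalised over covered holdings are all assumptions, not a description of how the ESG Analytics Tool calculates its figures.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Holding:
    ticker: str                  # hypothetical identifier
    weight: float                # portfolio weight as a fraction of NAV
    return_pct: float            # financial metric: period return in percent
    esg_score: Optional[float]   # non-financial metric; None if not covered


def portfolio_summary(holdings):
    """Aggregate financial and ESG metrics from single stocks to a portfolio."""
    covered = [h for h in holdings if h.esg_score is not None]
    covered_weight = sum(h.weight for h in covered)
    # Weighted-average ESG score, renormalised over covered holdings only
    # (an assumed convention for handling missing coverage).
    esg = (
        sum(h.weight * h.esg_score for h in covered) / covered_weight
        if covered_weight > 0
        else None
    )
    # Weight-summed contribution to portfolio return.
    ret = sum(h.weight * h.return_pct for h in holdings)
    return {"return_pct": ret, "esg_score": esg, "esg_coverage": covered_weight}


# Illustrative data only.
holdings = [
    Holding("AAA", 0.5, 2.0, 80.0),
    Holding("BBB", 0.3, -1.0, 60.0),
    Holding("CCC", 0.2, 4.0, None),
]
summary = portfolio_summary(holdings)
```

Reporting the coverage figure alongside the score is a deliberate choice in this sketch: it lets a reader see how much of the portfolio the ESG number actually describes.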

Data First!

The use of data has always been central to what we do, but as any data-driven organisation knows, the insights that can be gained by leveraging datasets in isolation are of limited use. The real power comes when you bring data together. By combining and enriching multiple datasets into a coherent whole, you can gain far greater insights than you could otherwise have done.

This joined-up approach to data is not new to Man Group. For many years, we have benefited from a powerful analytics engine in the form of ROSA. This in-house developed system brings together a wealth of data and analytics relating to our funds and positions. It combines data generated internally with datasets sourced from a wide range of third-party data providers, interrogated via an intuitive, bespoke query tool.

As the heart of our position, P&L and risk management, ROSA is constantly updated and is designed for speed and stability rather than for reporting. To complement ROSA, we identified the need for a separate centralised data repository, one that could be used to service ESG analytics and, in time, all our client reporting needs.

This new data repository, Cortex, is the foundation of the ESG analytics tool and will become the source of all data used for client reporting and internal access to fund performance. Remaining tightly integrated with ROSA, it is flexible enough to easily onboard new datasets and agnostic as to which visualisation tools are used to interact with it.

Many of the challenges we could have faced building Cortex, like relationships between datasets, a framework for adding additional datasets, or the ability to manage benchmark links to funds, had already been solved by ROSA. Our ability to leverage this sped up our development work and built confidence in the final product.

We also had the advantage of an existing set of ESG reports that provided valuable insights but didn’t adhere to Man’s design principles. We were able to take elements of the old reports, enhance their performance and scalability, ensure integrated security was baked into the design, and then generate visually rich and consistent dashboards.

Designing for the Future

The design and development of Cortex and the ESG analytics tool were done in parallel, led by Man’s Business Intelligence (BI) Development team and Infrastructure team within Trading Platform and Core Technology.

The biggest design choice we faced was the overall architecture of both Cortex and the ESG analytics tool: specifically, whether we should host them on our own hardware in our own datacentres or use a cloud provider to host them for us. Traditionally we have opted to host internally developed systems ourselves. We have the expertise and infrastructure to do this, along with the supporting processes to automate the build, deployment, and monitoring. The cloud route, on the other hand, would need a new skillset and the development of an equivalent set of processes to support build, deployment and monitoring.

Despite the learning curve, we decided to use Microsoft Azure SQL Database for Cortex and Tableau Online for the ESG reports. There were three compelling reasons to opt for a cloud-based solution:

- Supportability: with the hardware and software for each service managed by Microsoft and Tableau respectively, we were freed from having to worry about hardware upgrades or obsolescence;

- Scalability: Cortex and the ESG reporting tool needed the ability to scale quickly and easily. This need could be from additional datasets, an increase in compute power to improve data processing times or the creation of new dashboards. Using a hosted option meant we could more easily do that without impacting availability;

- Performance: with a global business and client base, we need to ensure the same level of performance regardless of where the reports are accessed from. Azure’s geo-replication functionality allows multiple synchronised database instances to be hosted close to the demand, greatly reducing latency compared to hosting in a single location and ensuring a consistent experience.

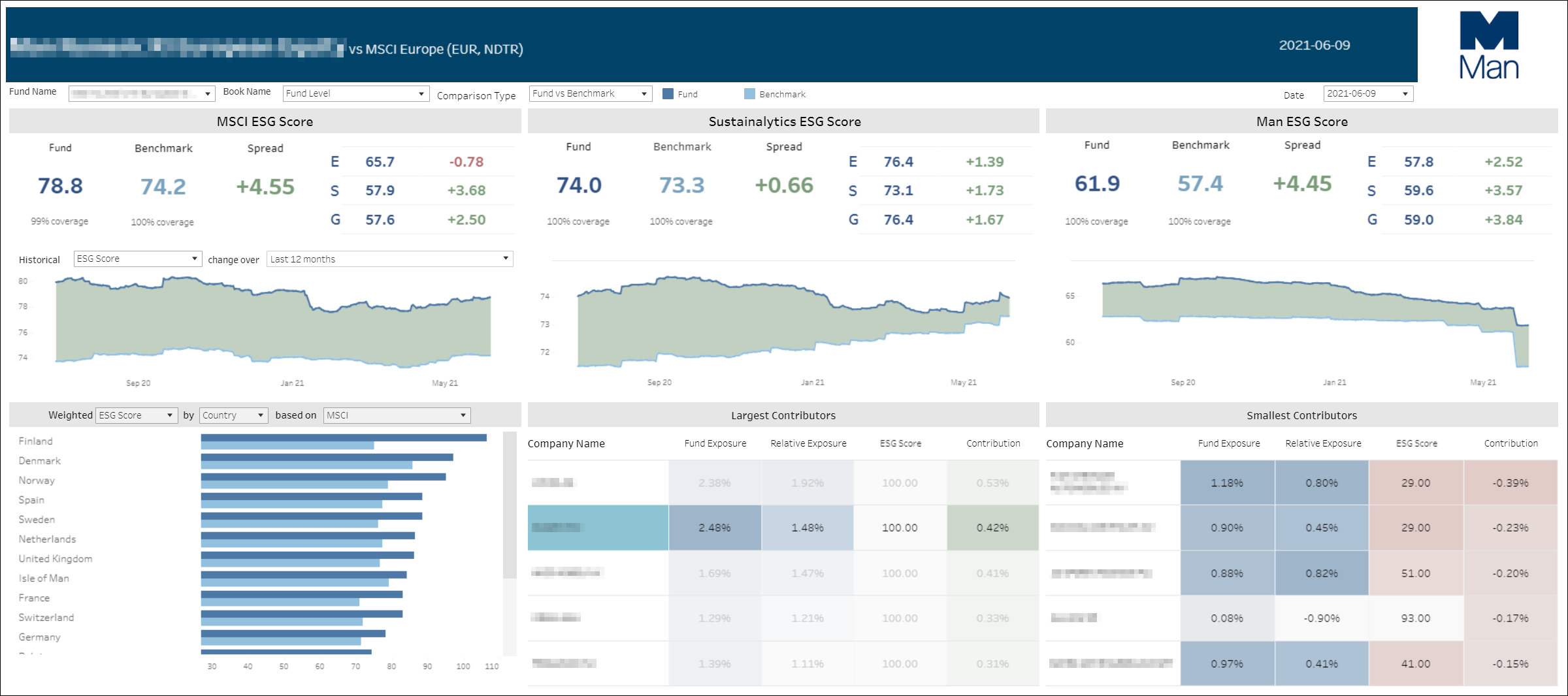

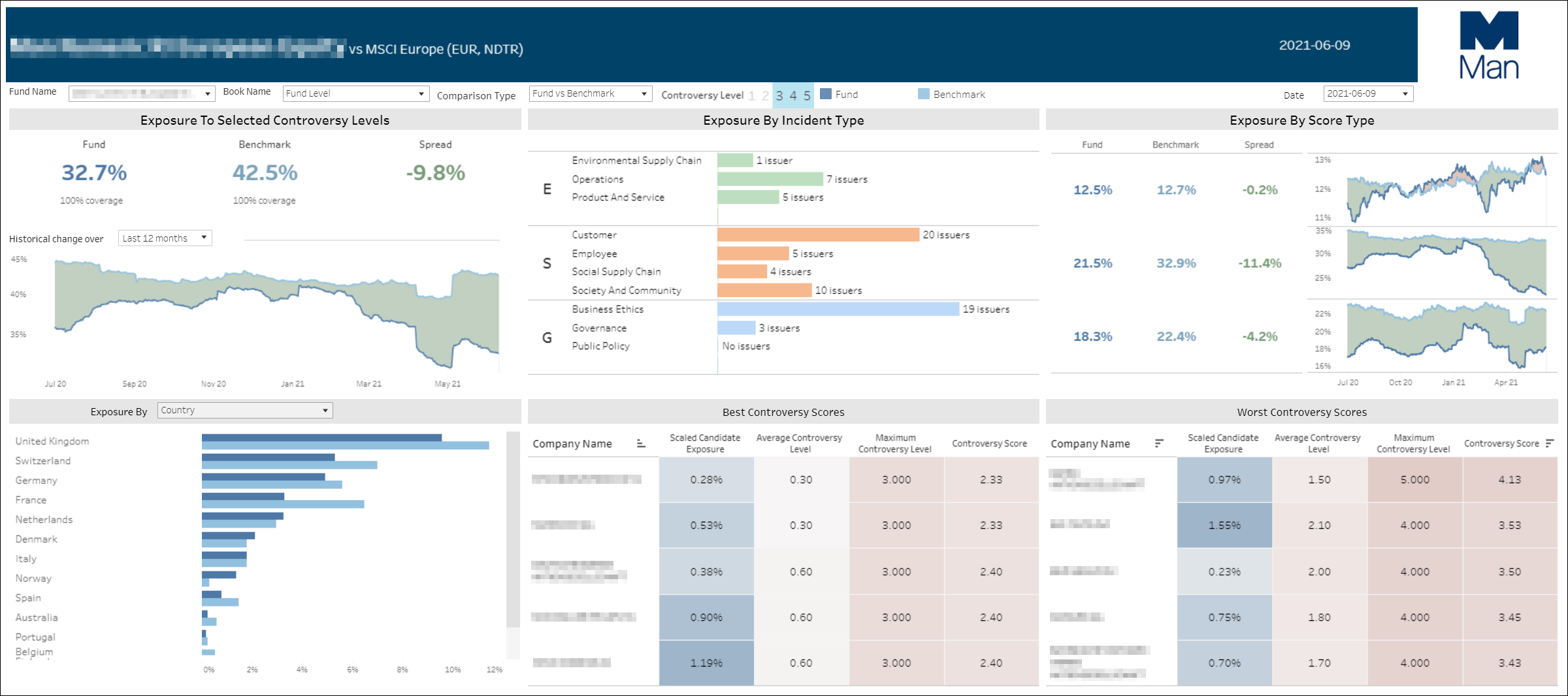

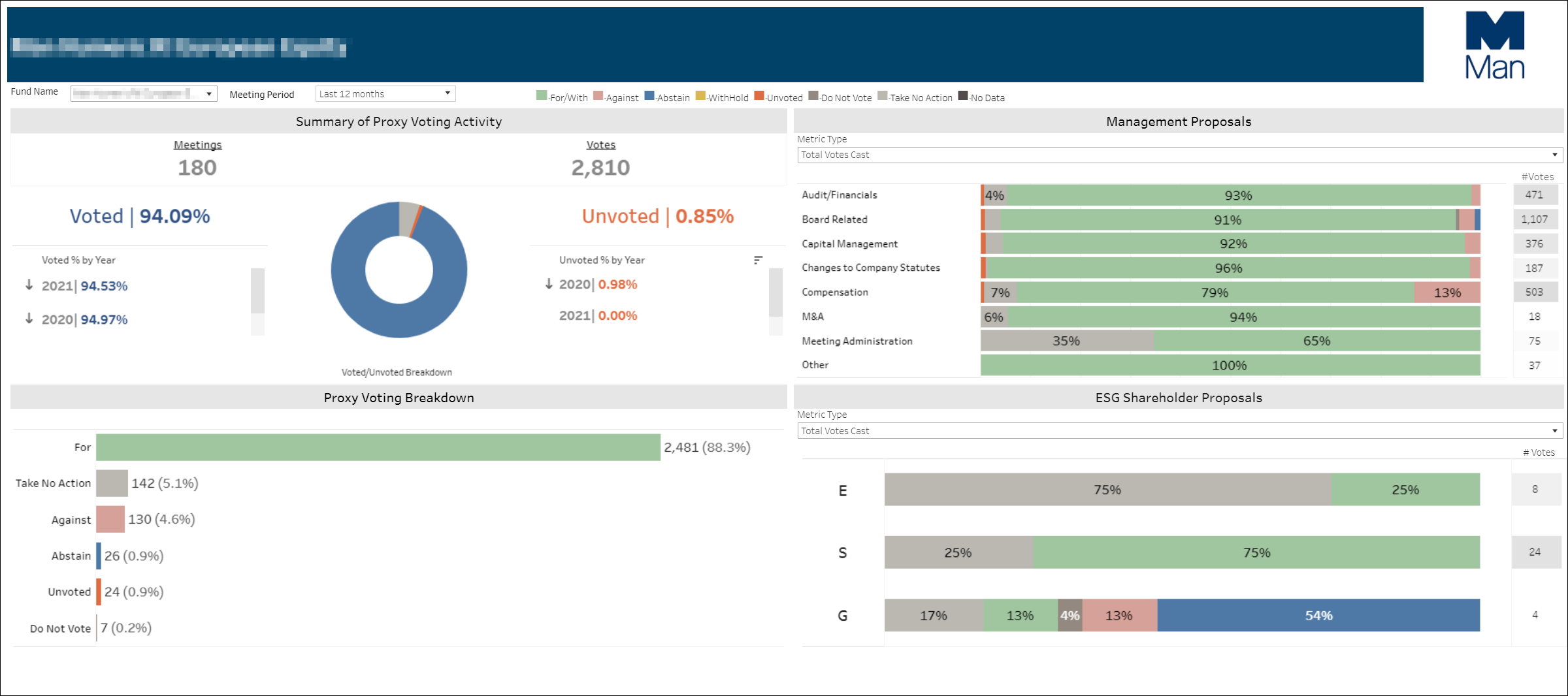

Example screens from the ESG Analytics tool

New Challenges

This was our first in-house developed solution that wasn’t hosted on-premises, so the Development and Infrastructure teams faced several challenges specific to a cloud deployment.

1. Automated deployment:

Our on-premises database changes are done using Octopus to deploy a DACPAC (a file encapsulating the database physical model). However, since Azure SQL Database does not have the concept of a server onto which we could deploy directly, this approach wouldn’t work. To get round this we configured an on-premises server to allow outbound traffic to Microsoft Azure via our proxy. We were then able to use Octopus to run commands on Azure from this server which facilitated the deployments of the DACPACs.
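As an illustration of the kind of command a deployment step might run from that on-premises server, the sketch below builds a publish command for Microsoft's SqlPackage command-line tool, which is one common way to apply a DACPAC to Azure SQL Database. The article does not state which commands were actually used, so the choice of SqlPackage, the Python wrapper, and the file, server and database names are all assumptions. Authentication arguments are deliberately left out.

```python
import subprocess


def build_publish_command(dacpac_path, server, database):
    """Build a SqlPackage 'Publish' command for a DACPAC.

    The server and database names passed in are hypothetical placeholders.
    Authentication arguments are omitted from this sketch.
    """
    return [
        "sqlpackage",
        "/Action:Publish",
        f"/SourceFile:{dacpac_path}",
        f"/TargetServerName:{server}",
        f"/TargetDatabaseName:{database}",
    ]


def publish(dacpac_path, server, database, dry_run=True):
    """Return the command; only execute it when dry_run is False."""
    cmd = build_publish_command(dacpac_path, server, database)
    if not dry_run:
        # Raises CalledProcessError if SqlPackage exits with a non-zero code.
        subprocess.run(cmd, check=True)
    return cmd


# Dry run: nothing is executed, the command is only assembled.
command = publish(
    "Cortex.dacpac",                         # hypothetical file name
    "example-server.database.windows.net",   # hypothetical server
    "cortex_dev",                            # hypothetical database
)
```

Defaulting to a dry run keeps the sketch safe to execute anywhere; a real deployment step would pass `dry_run=False` and supply credentials through the deployment tool's secret handling.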

We also faced the challenge of how to automate the deployment of Azure resources, such as a new database instance. The solution here is a reusable Terraform job, which generates the code to deploy resources onto Azure when requested.
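One way to picture a job that generates Terraform code on request is a small function that emits Terraform's JSON configuration syntax (a `.tf.json` file). The sketch below is a guess at the general shape rather than the job described above: the function name, labels, SKU and the referenced `azurerm_mssql_server.example` resource (assumed to be defined elsewhere) are all hypothetical.

```python
import json


def mssql_database_config(resource_label, database_name, server_id, sku_name="S0"):
    """Return a Terraform JSON configuration for one Azure SQL database.

    Uses the azurerm provider's `azurerm_mssql_database` resource type.
    All argument values supplied by callers here are illustrative.
    """
    return {
        "resource": {
            "azurerm_mssql_database": {
                resource_label: {
                    "name": database_name,
                    "server_id": server_id,
                    "sku_name": sku_name,
                }
            }
        }
    }


config = mssql_database_config(
    resource_label="cortex_dev",                       # hypothetical label
    database_name="cortex-dev",                        # hypothetical name
    server_id="${azurerm_mssql_server.example.id}",    # assumed to exist elsewhere
)

# Text that could be written to e.g. cortex_dev.tf.json for Terraform to apply.
rendered = json.dumps(config, indent=2)
```

Generating configuration from parameters like this keeps each new database request down to a handful of inputs, which is the main appeal of a reusable job.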

Similarly, we had to find an automated way to deploy dashboards to Tableau Online. Our solution is a combination of Tableau’s tabcmd utility and PowerShell, executed via Octopus. This enabled us to target dashboard deployments to multiple Tableau folders, providing the flexibility to deploy client-specific dashboards should we need to.
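The idea of publishing one dashboard to several targets can be sketched as a loop that builds one `tabcmd publish` command per destination. Note that this sketch uses Python rather than the PowerShell mentioned above, treats each Tableau folder as a Tableau project, and uses hypothetical workbook and project names. It only assembles the commands; running them would first require a session established with `tabcmd login`.

```python
def build_publish_commands(workbook_path, projects, overwrite=True):
    """Build one `tabcmd publish` command per target project."""
    commands = []
    for project in projects:
        cmd = ["tabcmd", "publish", workbook_path, "--project", project]
        if overwrite:
            # Replace an existing workbook of the same name in that project.
            cmd.append("--overwrite")
        commands.append(cmd)
    return commands


# Hypothetical workbook and project names, for illustration only.
publish_commands = build_publish_commands(
    "esg_dashboard.twbx",
    ["ESG Internal", "ESG Client A"],
)
```

Each resulting list could be passed to `subprocess.run` by a deployment step, one target at a time.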

2. Security

Octopus was also used to manage the creation of user accounts in an automated manner. Access to the ESG dashboards was granted via internal Active Directory groups which were manifested on the Azure SQL side automatically. This ensured that individuals had the right level of permissions without the risk and overhead of manually maintaining them.
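As a rough picture of what manifesting a directory group on the Azure SQL side can involve, the sketch below generates the T-SQL that creates a database user from an external identity provider and adds it to a role. It assumes the internal groups are available to Azure SQL through Azure Active Directory; the group name and the choice of the built-in `db_datareader` role are hypothetical, and the article does not describe the actual statements used.

```python
def quote_identifier(name):
    """Quote a T-SQL identifier with brackets, escaping any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def grant_group_statements(group_name, role="db_datareader"):
    """Return T-SQL that maps a directory group to a database role."""
    user = quote_identifier(group_name)
    return [
        # Creates a contained database user backed by the directory group.
        f"CREATE USER {user} FROM EXTERNAL PROVIDER;",
        # Grants the group's members the permissions of the chosen role.
        f"ALTER ROLE {quote_identifier(role)} ADD MEMBER {user};",
    ]


# Hypothetical group name, for illustration only.
statements = grant_group_statements("ESG-Dashboard-Readers")
```

Because permissions attach to the group rather than to individuals, joiners and leavers are handled by directory membership alone, which matches the aim of avoiding manual maintenance.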

3. Monitoring

We wanted to have parity with the monitoring that we have available on our in-house servers. For the Azure SQL resources, this is done using checkmk, while we use Nagios checks and underlying stored procedures to monitor the data quality.
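A data quality check in the Nagios style boils down to returning one of the standard plugin states (0 OK, 1 WARNING, 2 CRITICAL, 3 UNKNOWN) with a one-line message. The sketch below shows a freshness check of that shape. The thresholds, the function name and the sample timestamps are assumptions; in practice the last-load timestamp would presumably come from one of the underlying stored procedures rather than being passed in by hand.

```python
from datetime import datetime, timedelta

# Standard Nagios plugin return codes.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3


def check_freshness(last_loaded, now,
                    warn_after=timedelta(hours=24),
                    crit_after=timedelta(hours=48)):
    """Return (status_code, message) describing how stale the data is."""
    if last_loaded is None:
        return UNKNOWN, "UNKNOWN - no load timestamp available"
    age = now - last_loaded
    hours = age.total_seconds() / 3600
    if age >= crit_after:
        return CRITICAL, f"CRITICAL - data is {hours:.1f}h old"
    if age >= warn_after:
        return WARNING, f"WARNING - data is {hours:.1f}h old"
    return OK, f"OK - data is {hours:.1f}h old"


# Illustrative timestamps: the data was loaded 30 hours before the check ran.
code, message = check_freshness(
    last_loaded=datetime(2024, 1, 2, 6, 0),
    now=datetime(2024, 1, 3, 12, 0),
)
```

A real plugin would print the message and call `sys.exit(code)` so that the monitoring system can pick up the state.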

4. Connectivity

Man Group’s firewall was configured to allow us to make connections to specific external IP addresses for the Azure SQL instances. However, these external Azure IP addresses sat behind a load balancer and kept changing. This resulted in intermittent data load and connectivity issues during testing. Working with Microsoft, we overcame this issue by utilising Azure Private Link, configuring a connection from a fixed internal IP address to Azure SQL.
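One simple way to confirm that traffic is taking the private route is to check that the database hostname resolves to an internal address rather than a public one. The sketch below shows that check using only the Python standard library. It is an assumed verification step, not something described in the article, and the addresses are arbitrary examples.

```python
import ipaddress
import socket


def is_private_address(address):
    """Return True if the IP address lies in a private (non-public) range."""
    return ipaddress.ip_address(address).is_private


def resolves_privately(hostname):
    """Resolve a hostname and report whether it maps to a private address.

    Requires DNS access, so it is defined here but not called in this sketch.
    """
    return is_private_address(socket.gethostbyname(hostname))


# Arbitrary example addresses: one internal, one well-known public address.
internal_ok = is_private_address("10.20.30.40")
public_seen = is_private_address("8.8.8.8")
```

Run against the database hostname, a result of `False` from `resolves_privately` would suggest connections are still going to a changing public endpoint.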

Old Friends

As vital as Cortex is, end-users are only able to see the reports that allow them to interrogate and visualise the data. Our aim was to make this experience as elegant, intuitive and performant as possible.

We were already familiar with Tableau as an incredibly powerful and flexible visualisation tool used extensively internally and as part of our sales process. Because of our familiarity and that of our clients, Tableau was the natural choice for the ESG analytics tool. As well as the extensive skills and experience available in our in-house team, we had an existing relationship with Metasite, an external consultancy that also chose Tableau to complement its expertise in UX and UI design.

Starting with basic wireframe designs, our developers and Metasite maintained a consistent look and feel, aligned with Man’s corporate branding. At every stage we involved our key stakeholders, those individuals who know the data and how it could be used. We used an iterative approach of regular show and tell sessions, quickly incorporating feedback.

With development of Cortex and the ESG reporting tool being tightly integrated, this approach also meant that we were able to align the underlying data model and report interaction, ensuring that performance remained a key consideration.

Conclusion: What’s Next?

The creation of the platform represents a huge step forward in RI at Man Group. With an ESG analytics tool that meets its objectives of robust, flexible, trusted data and intuitive, performant reports, users are able to gain a much better understanding of the ESG dynamics of client holdings. The tool has also been received very positively by clients and is the first step towards our vision of a centralised data repository to service all of Man Group’s client reporting.

Our next challenge is a set of Tableau dashboards targeted at a subset of our clients, leveraging more of ROSA’s capabilities to provide rich position and risk analytics. These dashboards take their design cue from the ESG analytics tool; we have already onboarded our first client and are actively working on rolling the platform out to others. Because the dashboards replace an existing third-party solution, we can be more responsive to changing client priorities.

We are also partnering with Seismic, a cloud-hosted sales and marketing content management solution that provides a powerful tool for dynamically updating factsheets, brochures, and marketing materials. These materials will also rely on Cortex data, ensuring that we provide automated and consistent materials from the same central, approved set of data.

We have built a scalable data repository flexible enough to easily onboard new data sets, both for RI and more widely. We can also take advantage of ROSA’s rich functionality and provide multiple options for interrogating and understanding our data. We’re excited about the opportunities that this presents for both internal insights on our data and on the accessibility and transparency that it provides to our clients.
